🚀 Automating Scalable Infrastructure with Terraform & Ansible Dynamic Inventory

My name is Joseph Mbatchou, and I am grateful for the opportunity to introduce myself to you.

I have been in the tech industry for about 8 years, and I currently work as a Cloud Engineer at CloudSpace Consulting, LLC in Manassas, VA.

My journey began as a Computer Science Teacher and Computer Technician at Biopharcam, a computer sales company in my home country. Upon relocating to the United States, I joined Allied Universal, gradually advancing from an officer to a shift lead position. In this role, I oversaw various applications for training and operational tasks, collaborating closely with engineers and developers from Digital Realty, a data center provider.

Observing these professionals at work sparked my interest in cloud computing, leading me to pursue cloud classes, attend boot camps, and conduct in-depth research across various domains, including training at CloudSpace Academy. This exposure deepened my passion for IT and motivated me to transition into the industry to further my personal growth and contribute to organizational success.

As a Cloud Consultant, I have been privileged to collaborate with Cloud Solution Architects and DevOps teams on numerous projects, consistently exceeding customer expectations through our dedication and innovative solutions. My tenure in this role has enriched my skill set and broadened my professional experience.

Now, equipped with a wealth of knowledge in cloud computing and DevOps practices, I am eager to apply my expertise to new challenges and opportunities. I am confident in my ability to contribute effectively to your learning.

Thank you for taking the time to read my work. I look forward to having you on board for this learning process.

🚀 Overview:

Modern infrastructure demands automation, scalability, and repeatability. Manual provisioning of cloud resources and configuration management quickly becomes error-prone and unmaintainable as environments grow.

This project demonstrates how to build a fully automated, production-grade infrastructure pipeline using Terraform for infrastructure provisioning and Ansible with dynamic AWS inventory for configuration management.

The solution provisions multiple EC2 instances across environments (dev, stage, prod), dynamically discovers them using AWS APIs, and configures them automatically — all without hardcoded IP addresses or manual intervention.

🔧 Problem Statement

Traditional infrastructure workflows often suffer from:

Hardcoded server IPs in configuration files

Manual SSH access and host inventory management

Inconsistent environments across dev, stage, and prod

Infrastructure drift due to manual changes

Difficulty scaling across availability zones and environments

Additionally, many teams struggle with:

Incorrect subnet placement

SSH connectivity failures due to networking misconfigurations

Poor separation between provisioning and configuration

This project solves these problems by combining Infrastructure as Code (IaC) with dynamic configuration orchestration.

💽 Technology Stack

| Category | Tools |
| --- | --- |
| Infrastructure Provisioning | Terraform |
| Configuration Management | Ansible |
| Dynamic Inventory | Ansible AWS EC2 Plugin |
| Cloud Provider | AWS |
| Operating System | Ubuntu 22.04 LTS |
| Security | AWS Security Groups, IAM |
| Scripting | Bash |
| Version Control | Git & GitHub |

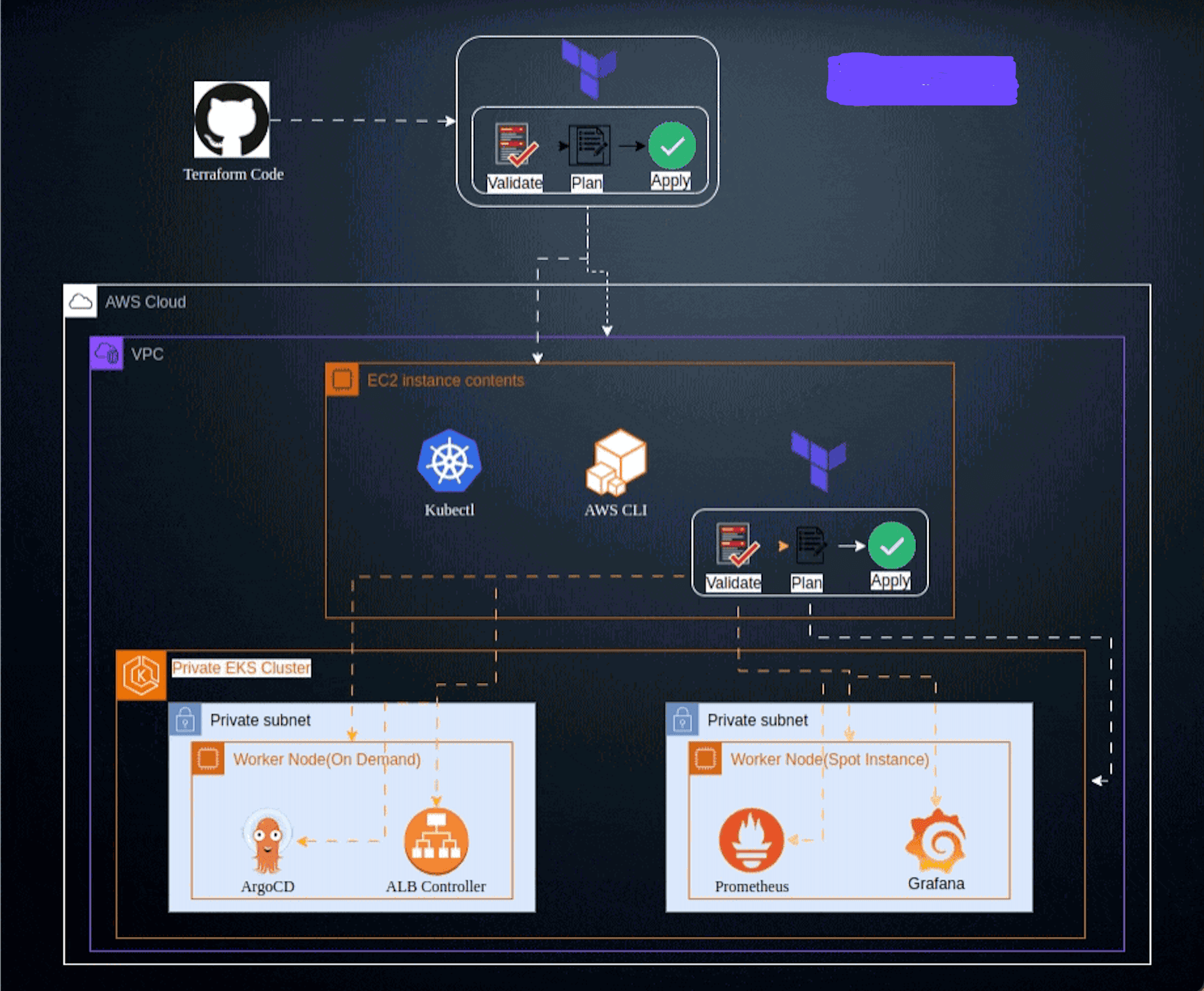

📌 Architecture Diagram

Local Machine (Deploy Scripts)

▼

Terraform (IaC)

- VPC & Subnets (existing)
- EC2 Controller
- EC2 Application Nodes
- Security Groups
- SSH Key Pair

▼

AWS EC2 Instances

- Dev / Stage / Prod
- Auto-assigned Public IP

▼

Ansible Dynamic Inventory

- AWS API Discovery
- Tag-based Grouping

▼

Ansible Playbooks

- Configure environments
- Apply roles

Key Architectural Decisions

Dynamic inventory eliminates static host files

Tag-based grouping separates dev/stage/prod automatically

Terraform-generated SSH keypair ensures secure access

Public subnet detection via route tables prevents silent networking failures

Preflight validation ensures infrastructure readiness before configuration
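
The public subnet decision above rests on a simple rule: a subnet is public when its route table has a route through an internet gateway. The sketch below checks that rule from the shell with the AWS CLI. The function name `is_public_subnet` and the subnet ID in the usage line are placeholders, and this is not necessarily how the repository implements the check. A subnet that relies on the VPC's main route table has no explicit association and would need a separate lookup.

```shell
# Succeeds when the route table explicitly associated with the subnet
# has at least one route whose gateway is an internet gateway (igw-...).
# Assumption: the subnet has an explicit route table association.
is_public_subnet() {
  subnet_id="$1"
  gateways=$(aws ec2 describe-route-tables \
    --filters "Name=association.subnet-id,Values=${subnet_id}" \
    --query 'RouteTables[].Routes[].GatewayId' \
    --output text)
  case "$gateways" in
    *igw-*) return 0 ;;
    *)      return 1 ;;
  esac
}
```

A preflight step could call it as `is_public_subnet subnet-0123456789abcdef0 || echo "subnet is not public"` before any instance is launched.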

🌟 Project Requirements

AWS account with permissions for EC2, VPC, IAM

Terraform ≥ 1.5

Ansible ≥ 2.15

AWS CLI configured (aws configure)

Bash shell

Git

📋 Table of Contents

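I - Terraform Configuration files

Step 1: Provider Configuration

Step 2: Variables Configuration

Step 3: Main Configuration

Step 4: IAM Role Configuration

Step 5: Output Configuration

Step 6: User-data

II - Ansible files

Step 7: Inventory file

Step 8: Ansible Configuration

Step 9: Playbook file

Step 10: Requirements file

Step 11: Handlers file

Step 12: Tasks file

Step 13: Template files

III - Scripting files

Step 14: Deploy file

Step 15: Destroy file

IV - Instructions of Deployment

Step 16: Clone Repository

Step 17: Run Deployment

Step 18: Destroy Infrastructure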

✨Terraform Configuration files

The infrastructure is defined across several Terraform files, each responsible for one part of the setup.

Step 1: Provider Configuration

Here we declare the cloud provider (AWS) and specify the region where the resources will be launched.
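
As an illustration, a provider file could look like the sketch below, written from the shell with a heredoc. The region `us-east-1` and the `~> 5.0` provider constraint are assumptions, not values taken from the repository. The Terraform version constraint follows the project requirements.

```shell
# Write a minimal provider.tf (region and provider version are assumed).
cat > provider.tf <<'EOF'
terraform {
  required_version = ">= 1.5.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

provider "aws" {
  region = "us-east-1" # assumed region; change to match your account
}
EOF
```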

Step 2: Variables Configuration

This is where we declare all variables and their values, that is, every element that can vary between deployments. Variables let us reuse values throughout the code without repeating ourselves, and they keep the configuration flexible.
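
A minimal sketch of such a file follows. The variable names and default values are hypothetical. Only the three environment names come from this article.

```shell
# Write an example variables.tf (names and defaults are illustrative).
cat > variables.tf <<'EOF'
variable "aws_region" {
  description = "Region where resources are launched"
  type        = string
  default     = "us-east-1"
}

variable "environments" {
  description = "Environments to provision"
  type        = list(string)
  default     = ["dev", "stage", "prod"]
}

variable "instance_type" {
  description = "EC2 instance type for all instances"
  type        = string
  default     = "t2.micro"
}
EOF
```

Other files would then reference a value as `var.instance_type`, and a default can be overridden at run time with `terraform apply -var 'instance_type=t3.small'`.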

Step 3: Main Configuration

This is where you create the foundation and networking on which all other resources are launched. It includes the key pair, the security groups, and the EC2 instances.
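
To show one piece of this file, the sketch below covers only the Terraform-generated key pair. It assumes the `hashicorp/tls` and `hashicorp/local` providers are available. The key name and file name are placeholders, and the repository may generate its key differently.

```shell
# Write a key pair fragment; security groups and instances are omitted.
cat > keypair.tf <<'EOF'
# Generate a private key inside Terraform (stored in state, so protect it).
resource "tls_private_key" "ssh" {
  algorithm = "RSA"
  rsa_bits  = 4096
}

# Register the public half with AWS under an assumed name.
resource "aws_key_pair" "generated" {
  key_name   = "terra-ansible-key"
  public_key = tls_private_key.ssh.public_key_openssh
}

# Save the private half locally with owner-read-only permissions.
resource "local_sensitive_file" "private_key" {
  content         = tls_private_key.ssh.private_key_pem
  filename        = "${path.module}/terra-ansible-key.pem"
  file_permission = "0400"
}
EOF
```

An instance would then use the key through `key_name = aws_key_pair.generated.key_name`.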

Step 4: IAM Role Configuration

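We attach an IAM role to the controller so that it receives temporary credentials instead of long-lived access keys. SSH access between the controller and the other instances is handled by the key pair and security groups; the role is what allows the controller to call AWS APIs, for example to discover instances for the dynamic inventory.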
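
A role of this kind is usually built from three resources: the role itself, a permissions policy, and an instance profile that attaches the role to an EC2 instance. The sketch below assumes the controller only needs read access to EC2 metadata. All resource names and the exact list of actions are assumptions.

```shell
# Write an example iam.tf (names and permissions are illustrative).
cat > iam.tf <<'EOF'
resource "aws_iam_role" "controller" {
  name = "ansible-controller-role"

  # Trust policy: let EC2 instances assume this role.
  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect    = "Allow"
      Action    = "sts:AssumeRole"
      Principal = { Service = "ec2.amazonaws.com" }
    }]
  })
}

# Read-only EC2 access, assumed to be enough for instance discovery.
resource "aws_iam_role_policy" "describe_ec2" {
  name = "describe-ec2"
  role = aws_iam_role.controller.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect   = "Allow"
      Action   = ["ec2:DescribeInstances", "ec2:DescribeTags"]
      Resource = "*"
    }]
  })
}

resource "aws_iam_instance_profile" "controller" {
  name = "ansible-controller-profile"
  role = aws_iam_role.controller.name
}
EOF
```

The controller instance would reference the profile with `iam_instance_profile = aws_iam_instance_profile.controller.name`.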

Step 5: Output Configuration

Output values are a convenient way to print useful information about your infrastructure on the CLI. Here they show the public IPs of all instances (controller and nodes).
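
For example, an outputs file might look like the sketch below. It assumes the instances are declared as `aws_instance.controller` and `aws_instance.node`, which are hypothetical resource names.

```shell
# Write an example outputs.tf (resource and output names are assumed).
cat > outputs.tf <<'EOF'
output "controller_public_ip" {
  description = "Public IP of the Ansible controller"
  value       = aws_instance.controller.public_ip
}

output "node_public_ips" {
  description = "Public IPs of the application nodes"
  value       = [for node in aws_instance.node : node.public_ip]
}
EOF
```

After an apply, `terraform output` prints every value, and `terraform output -raw controller_public_ip` prints a single one in a form that scripts can consume.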

Step 6: User-data

User data is a script or custom data that you provide to an EC2 instance to be executed automatically on its first launch. It automates the initial setup of an instance, such as installing software, configuring settings, or running commands needed to make the instance ready for use. In our case there are two user data scripts: one for the controller, which installs Ansible and all its dependencies, and one for the nodes, which installs Python 3.
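
The two scripts could be as small as the sketch below. The file names are placeholders, and installing Ansible with pip is one plausible method on Ubuntu 22.04 rather than the repository's confirmed approach. User data runs as root, so no `sudo` is needed.

```shell
# Node user data: nodes only need Python 3 so Ansible can manage them.
cat > node-userdata.sh <<'EOF'
#!/bin/bash
set -euo pipefail
apt-get update -y
apt-get install -y python3
EOF

# Controller user data: Ansible plus the AWS SDK used by the EC2 inventory plugin.
cat > controller-userdata.sh <<'EOF'
#!/bin/bash
set -euo pipefail
apt-get update -y
apt-get install -y python3 python3-pip
pip3 install ansible boto3 botocore
EOF
```

In Terraform, each script is typically passed to an instance with `user_data = file("node-userdata.sh")`.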

✨Ansible files

Configuration is defined across several Ansible files, each responsible for one part of the setup.

Step 7: Inventory file

The Ansible AWS EC2 inventory plugin dynamically discovers EC2 instances directly from AWS using API calls instead of static host files. Instances are grouped automatically based on tags, regions, or environments, ensuring inventories stay accurate as infrastructure scales or changes.
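
A minimal inventory file for the plugin might look like this sketch. The region and the `Environment` tag key are assumptions. The file name must end in `aws_ec2.yml` or `aws_ec2.yaml` for the plugin to accept it.

```shell
# Write an example dynamic inventory config (region and tag key are assumed).
cat > aws_ec2.yml <<'EOF'
plugin: amazon.aws.aws_ec2
regions:
  - us-east-1
filters:
  instance-state-name: running
# Build groups such as env_dev, env_stage, env_prod from the Environment tag.
keyed_groups:
  - key: tags.Environment
    prefix: env
# Connect over each instance's public IP.
compose:
  ansible_host: public_ip_address
EOF
```

Running `ansible-inventory -i aws_ec2.yml --graph` then prints the discovered hosts and the groups they were placed in.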

Step 8: Ansible Configuration

The ansible.cfg file defines global Ansible behavior such as inventory location, SSH settings, privilege escalation, and retry behavior. It standardizes execution across environments and prevents inconsistencies caused by user-specific defaults.
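
The settings listed above map onto the file roughly as in the sketch below. The inventory path, key file name, and retry count are assumptions. `ubuntu` is the default user on Ubuntu AMIs.

```shell
# Write an example ansible.cfg (paths and values are illustrative).
cat > ansible.cfg <<'EOF'
[defaults]
inventory = ./aws_ec2.yml
remote_user = ubuntu
private_key_file = ./terra-ansible-key.pem
host_key_checking = False
retry_files_enabled = False

[inventory]
enable_plugins = amazon.aws.aws_ec2

[privilege_escalation]
become = True

[ssh_connection]
retries = 3
EOF
```

Disabling host key checking is convenient for short-lived instances whose keys change on every deployment, but it trades away a safety check and deserves a second look for long-lived hosts.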

Step 9: Playbook file

An Ansible playbook is a declarative YAML file that defines which tasks run on which hosts and in what order. It orchestrates configuration, package installation, and service management in a repeatable and idempotent manner.
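
The sketch below shows the shape of such a playbook. The choice of nginx as the web server is an assumption, as are the group names `env_dev`, `env_stage`, and `env_prod`, which presume an inventory that groups hosts by an Environment tag with an `env` prefix.

```shell
# Write an example playbook (web server and group names are assumed).
cat > site.yml <<'EOF'
- name: Configure web servers in every environment
  hosts: env_dev:env_stage:env_prod
  become: true
  tasks:
    - name: Install nginx
      ansible.builtin.apt:
        name: nginx
        state: present
        update_cache: true

    - name: Ensure nginx is running and enabled at boot
      ansible.builtin.service:
        name: nginx
        state: started
        enabled: true
EOF
```

Because both modules describe a desired state, a second run of `ansible-playbook site.yml` reports no changes when the hosts are already configured.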

Step 10: Requirements file

The requirements.yml file defines external Ansible roles or collections required by the project. This ensures consistent dependencies across environments and allows easy setup using a single installation command.
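
For this project the file may need nothing more than the collection that provides the EC2 inventory plugin. Whether the repository lists other entries is not known, so the sketch below is a minimal assumption.

```shell
# Write a minimal requirements.yml listing the AWS collection.
cat > requirements.yml <<'EOF'
collections:
  - name: amazon.aws
EOF
```

The single installation command is then `ansible-galaxy collection install -r requirements.yml`.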

Step 11: Handlers file

Handlers are special Ansible tasks that run only when triggered by a change in another task. They are commonly used for operations like restarting services, ensuring actions occur only when necessary and avoiding unnecessary disruptions.
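
The sketch below shows the trigger mechanism in a single file: a task names a handler in `notify`, and the handler runs once at the end of the play only if that task reported a change. The file names, the nginx service, and the config path are assumptions.

```shell
# Write a small demo playbook pairing a task with a handler.
cat > handler-demo.yml <<'EOF'
- name: Handler demo
  hosts: all
  become: true
  tasks:
    - name: Deploy site configuration
      ansible.builtin.copy:
        src: site.conf
        dest: /etc/nginx/conf.d/site.conf
      notify: Restart nginx

  handlers:
    - name: Restart nginx
      ansible.builtin.service:
        name: nginx
        state: restarted
EOF
```

If `site.conf` is unchanged on a later run, the copy task reports no change and nginx is not restarted.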

Step 12: Tasks file

The tasks file lists the actions the playbook runs on each host, in order, such as installing the web server and deploying the environment-specific index page.

Step 13: Template files

The index.html template is a Jinja2-based HTML file dynamically rendered by Ansible during deployment. It allows environment-specific values (such as hostnames or environment names) to be injected into web content automatically. Deploys environment-specific index.html pages:

prod.html → Portfolio UI with achievements, skills, certifications, socials, and image.

dev.html → Minimal UI with a developer theme.

stage.html → Modern preview UI.
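
A stripped-down sketch of one template and the task that renders it follows. The variable `env_name` is hypothetical and would have to be defined per environment (for example in group variables). The page content and the destination path are assumptions too.

```shell
# Write a minimal Jinja2 template for the dev page.
cat > dev.html <<'EOF'
<!DOCTYPE html>
<html>
  <head><title>{{ env_name }} environment</title></head>
  <body>
    <h1>Hello from {{ inventory_hostname }}</h1>
    <p>Environment: {{ env_name }}</p>
  </body>
</html>
EOF

# Write a task that picks the template matching the host's environment.
cat > render-index.yml <<'EOF'
- name: Render the environment-specific index page
  ansible.builtin.template:
    src: "{{ env_name }}.html"
    dest: /var/www/html/index.html
    mode: "0644"
EOF
```

`inventory_hostname` is a built-in Ansible variable, so each rendered page identifies the host it was deployed to.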

✨ Scripting files

You need to write Bash scripts that create and destroy the resources. Each script contains every step in order, that is, all the Terraform commands and Ansible operations needed to run the process.

Step 14: Deploy file

This script initializes the working directory, validates the configuration, plans the resources to be created, and applies the plan to launch them. It then connects to the controller over SSH and deploys the index web page to all 6 instances across the three environments.
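
The flow described above can be sketched as two shell functions. This is an outline under assumptions, not the repository's actual deploy.sh: the output name `controller_public_ip`, the key file, the playbook name `site.yml`, and the presence of the Ansible files on the controller are all hypothetical.

```shell
# Provision infrastructure; each stage must succeed before the next runs.
provision() {
  terraform init -input=false &&
  terraform fmt -check &&
  terraform validate &&
  terraform plan -input=false -out=tfplan &&
  terraform apply -input=false tfplan
}

# Run the playbook from the controller over SSH.
# Assumes the playbook and inventory are already present on the controller.
configure() {
  controller_ip=$(terraform output -raw controller_public_ip) &&
  ssh -i "${KEY_FILE:-terra-ansible-key.pem}" "ubuntu@${controller_ip}" \
    'ansible-playbook -i aws_ec2.yml site.yml'
}
```

A script would end with `provision && configure`. Applying the saved `tfplan` file means the changes that run are exactly the ones shown by the plan stage.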

Step 15: Destroy file

This script runs the Terraform command that destroys all the resources created, along with their dependencies and the Ansible setup.
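
A minimal sketch of the teardown, assuming a saved plan file named `tfplan` is the only local artifact left to clean up (the repository's script may remove more):

```shell
# Destroy every resource in the Terraform state, then remove the saved plan.
teardown() {
  terraform destroy -auto-approve &&
  rm -f tfplan
}
```

The `-auto-approve` flag skips the interactive confirmation, so it is worth double-checking the working directory before calling `teardown`.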

💼 Instructions of Deployment

Follow these steps to deploy the architecture:

Step 16: Clone Repository:

Clone the repository to your local machine with git clone, then enter the folder:

git clone https://github.com/Joebaho/Terra-Ansible-Dynamic.git

cd Terra-Ansible-Dynamic

Step 17: Run Deployment

For a one-time deployment of the infrastructure and configuration of the application, run both commands below. They launch the whole process: Terraform provisions the infrastructure and Ansible configures the web pages in each environment.

chmod +x deploy.sh

./deploy.sh

The script runs each Terraform command in turn and prints its progress:

Terraform init: initializes the working directory.

Terraform fmt & validate: check the formatting and syntax, and confirm that the configuration files are valid.

Terraform apply: creates all the resources.

Terraform output: prints the output values for the user.

Once the infrastructure is deployed, Ansible takes over. It first uses the AWS EC2 plugin to collect the public IPs of all the instances.

Ansible then runs the playbook,

working through each environment in turn.

When the playbook completes, you will see a confirmation.

Back in the AWS console, you can see all the instances that were created.

Paste any instance's public IP into a new browser window and the web page will display. Because there are three environments, there are three web pages:

Prod webpage

Dev webpage

Stage webpage

Step 18: Destroy infrastructure

When you are done with the infrastructure and ready to destroy it, make the script executable and then run it:

chmod +x destroy.sh

./destroy.sh

The script destroys all the infrastructure and generated configuration files, and prints a confirmation when it finishes.

📌 Learning Outcomes

Through this project, you will learn how to:

Build production-ready Terraform modules

Avoid common AWS networking pitfalls (public vs private subnets)

Use Ansible dynamic inventory with AWS

Eliminate static inventory files

Secure SSH access using Terraform-managed keys

Debug real-world infrastructure issues (timeouts, subnet routing)

Structure deploy/destroy workflows professionally

Create reusable DevOps portfolio projects

🔗 Resources

Terraform AWS Provider Documentation

https://registry.terraform.io/providers/hashicorp/aws/latest/docs

🤝 Contributing

Your perspective is valuable! Whether you see potential for improvement or appreciate what's already here, your contributions are welcomed and appreciated. Thank you for considering joining us in making this project even better. Feel free to follow me for updates on this project and others, and to explore opportunities for collaboration. Together, we can create something amazing!

Contributions are welcome 🚀

If you’d like to improve this project:

Fork the repository

Create a feature branch

Submit a pull request

Ideas for contributions:

Add private subnet + NAT architecture

Introduce Ansible roles

Add CI/CD validation

Extend to multi-region deployments

📄 License

This project is licensed under the JoebahoCloud License