Terraform: Mount S3 Bucket on EC2 (Ubuntu) using s3fs‑fuse

My name is Joseph Mbatchou, and I am grateful for the opportunity to introduce myself to you.

I have been in the tech industry for about 8 years, and I currently work as a Cloud Engineer at CloudSpace Consulting, LLC in Manassas, VA.

My journey began as a Computer Science Teacher and Computer Technician at Biopharcam, a computer sales company in my home country. Upon relocating to the United States, I joined Allied Universal, gradually advancing from an officer to a shift lead position. In this role, I oversaw various applications for training and operational tasks, collaborating closely with engineers and developers from Digital Realty, a data center provider.

Observing these professionals at work sparked my interest in cloud computing, leading me to pursue cloud classes, attend boot camps, and conduct in-depth research across various domains, including training at CloudSpace Academy. This exposure deepened my passion for IT and motivated me to transition into the industry to further my personal growth and contribute to organizational success.

As a Cloud Consultant, I have been privileged to collaborate with Cloud Solution Architects and DevOps teams on numerous projects, consistently exceeding customer expectations through our dedication and innovative solutions. My tenure in this role has enriched my skill set and broadened my professional experience.

Now, equipped with a wealth of knowledge in cloud computing and DevOps practices, I am eager to apply my expertise to new challenges and opportunities. I am confident in my ability to contribute effectively to your knowledge.

Thank you for considering my work. I look forward to having you on board for the learning process.

Introduction

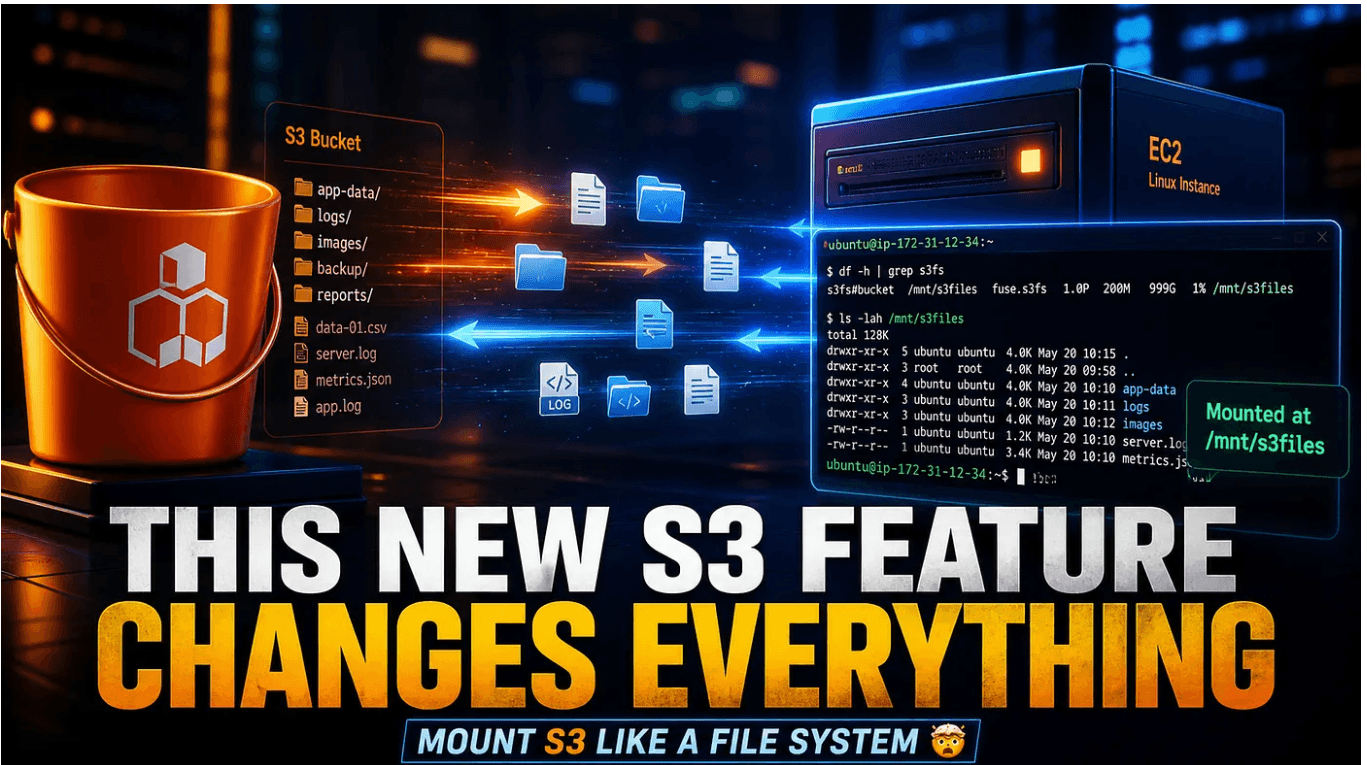

Amazon continues to blur the lines between object storage and traditional file systems. With the launch of Amazon S3 Files, working with S3 becomes far more intuitive for engineers who prefer file system semantics over API-driven operations. This project automates the creation of an S3 bucket, an EC2 instance (Ubuntu 22.04), an IAM role with S3 permissions, and the automatic mounting of the bucket as a local file system using s3fs‑fuse. It uses Terraform to provision the infrastructure and a user‑data script to configure the mount on the instance.

📖 Overview

When you need to access S3 objects as if they were local files (e.g., for legacy applications, log processing, or shared storage), s3fs‑fuse is a reliable solution. This repository provides a complete, production‑ready Terraform setup that:

Creates an S3 bucket with versioning enabled (optional) and public access blocked.

Launches an Ubuntu 22.04 LTS EC2 instance with a public IP.

Attaches an IAM role that grants read/write access to the bucket.

Installs s3fs‑fuse and mounts the bucket to /mnt/s3-bucket automatically on startup.

Adds an entry to /etc/fstab to persist the mount across reboots.

All resources are tagged, well‑structured, and easy to destroy when no longer needed.
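The install, mount, and /etc/fstab steps listed above could be sketched roughly as follows. This is a minimal sketch and not the repository's actual userdata.sh: the function names `fstab_entry` and `mount_bucket` are hypothetical, and the option set (`_netdev`, `allow_other`, `iam_role=auto`) is an assumption based on common s3fs usage.

```shell
# Hypothetical helpers; the real userdata.sh in the repository may differ.

# Print an /etc/fstab line that mounts BUCKET at MOUNT_POINT through s3fs,
# taking credentials from the IAM role attached to the instance.
fstab_entry() {
  local bucket="$1" mount_point="$2"
  printf '%s %s fuse.s3fs _netdev,allow_other,iam_role=auto 0 0\n' \
    "$bucket" "$mount_point"
}

# Install s3fs, mount the bucket, and persist the mount (must run as root).
mount_bucket() {
  local bucket="$1" mount_point="$2"
  apt-get update -y && apt-get install -y s3fs
  mkdir -p "$mount_point"
  s3fs "$bucket" "$mount_point" -o allow_other,iam_role=auto
  # Append the fstab entry only if one is not already present for this path.
  grep -qs " $mount_point fuse.s3fs " /etc/fstab ||
    fstab_entry "$bucket" "$mount_point" >> /etc/fstab
}
```

On the instance, a call such as `mount_bucket my-unique-bucket-name-12345 /mnt/s3-bucket` (run as root) would perform the steps. Keeping the fstab line in its own function makes it easy to inspect before anything is written to disk.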

🗂️ Project Structure

├── provider.tf # Terraform and AWS provider configuration

├── variables.tf # Input variables (bucket name, key name, region, etc.)

├── terraform.tfvars # (create yourself) – actual variable values

├── data.tf # Ubuntu 22.04 AMI lookup

├── s3.tf # S3 bucket, versioning, and public access block

├── iam.tf # IAM role, policy, and instance profile

├── security-groups.tf # Security group (SSH and HTTP access)

├── ec2.tf # EC2 instance + Elastic IP (optional)

├── userdata.sh # Script to install s3fs and mount the bucket

├── outputs.tf # Public IP, DNS, bucket name, mount point

└── README.md # This file

🧰 Prerequisites

Terraform (≥ 1.0)

AWS CLI configured with credentials having permissions to create EC2, S3, IAM, and security groups.

An existing EC2 key pair in your AWS account (for SSH access).

Basic knowledge of AWS and Terraform.

⚙️ Setup & Deployment

Clone the repository

git clone https://github.com/joebaho2/S3-FILES-TF.git

cd S3-FILES-TF

Create terraform.tfvars

Create a file named terraform.tfvars with your specific values:

aws_region = "us-west-2"

bucket_name = "my-unique-bucket-name-12345" # must be globally unique

key_name = "your-existing-key-pair-name"

instance_type = "t2.micro" # free tier eligible

mount_point = "/mnt/s3-bucket" # customise if needed
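Because a rejected bucket name only surfaces at apply time, a quick local check before running Terraform can save a round trip. The sketch below is a hypothetical helper (`valid_bucket_name` is not part of the repository) and covers only a subset of the S3 naming rules: length, allowed characters, first and last character, and adjacent dots. It cannot tell whether the name is already taken.

```shell
# Hypothetical pre-flight check; covers only a subset of the S3 naming rules.
valid_bucket_name() {
  name="$1"
  len=${#name}
  # Names must be 3 to 63 characters long.
  [ "$len" -ge 3 ] && [ "$len" -le 63 ] || return 1
  # Lowercase letters, digits, dots, and hyphens only; the first and last
  # characters must be a letter or a digit.
  printf '%s\n' "$name" |
    LC_ALL=C grep -Eq '^[a-z0-9][a-z0-9.-]*[a-z0-9]$' || return 1
  # Two adjacent dots are not allowed.
  case "$name" in *..*) return 1 ;; esac
  return 0
}
```

For example, `valid_bucket_name "my-unique-bucket-name-12345" && terraform plan` would only run the plan when the name passes these basic checks.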

Initialize Terraform

terraform init

Format the code and validate the configuration syntax

terraform fmt

terraform validate

Review the plan

terraform plan

Apply

terraform apply -auto-approve

Go back to the AWS console to confirm that the resources were created, including the EC2 instance and the S3 bucket.

After completion, Terraform outputs the public IP, bucket name, and mount point.

Connect to the instance

ssh -i your-key.pem ubuntu@$(terraform output -raw instance_public_ip)

Verify the mount

df -h | grep s3fs

ls -la /mnt/s3-bucket

echo "Hello from EC2" | sudo tee /mnt/s3-bucket/test.txt

You can now read and write files; they are stored directly in S3.

The previous command created a file named test.txt, which was written straight to the S3 bucket through the mount.

You can also upload a file through the console and confirm from the CLI that it appears under the mount point.
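The mount check can also be scripted, for instance in a health check. The following is a minimal sketch that assumes s3fs mounts are reported with the filesystem type `fuse.s3fs` in /proc/mounts; the function name `is_s3fs_mounted` and its optional second argument are hypothetical and exist only for illustration.

```shell
# Hypothetical check: succeed if MOUNT_POINT is listed as an s3fs mount.
# MOUNTS_FILE defaults to /proc/mounts and can be overridden for testing.
is_s3fs_mounted() {
  mount_point="$1"
  mounts_file="${2:-/proc/mounts}"
  # /proc/mounts columns: device, mount point, filesystem type, options, ...
  awk -v mp="$mount_point" \
    '$2 == mp && $3 == "fuse.s3fs" { found = 1 } END { exit !found }' \
    "$mounts_file"
}
```

A call such as `is_s3fs_mounted /mnt/s3-bucket || echo "bucket is not mounted"` returns a clean exit status, which is easier to act on in scripts than grepping the output of `df`.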

🧼 Clean Up

To prevent ongoing charges, destroy all resources.

terraform destroy -auto-approve

Note: The S3 bucket is configured with force_destroy = true. This will delete all objects and versions inside the bucket before removing the bucket itself. Use with caution – data cannot be recovered.

🛠️ Customisation

| Variable | Description | Default |
| --- | --- | --- |
| aws_region | AWS region | us-west-2 |
| bucket_name | Unique S3 bucket name | (required) |
| key_name | EC2 key pair name | (required) |
| instance_type | EC2 instance type | t2.micro |
| mount_point | Local directory for the mount | /mnt/s3-bucket |

You can also adjust the security group ingress rules in security-groups.tf (e.g., restrict SSH to your IP).

🔧 Troubleshooting

The mount point is empty or the bucket is not mounted

Check the user‑data script logs on the instance:

sudo cat /var/log/cloud-init-output.log

Manually install s3fs and mount (replace <bucket-name> with your bucket name):

sudo apt update && sudo apt install -y s3fs

sudo s3fs <bucket-name> /mnt/s3-bucket -o allow_other,use_cache=/tmp,iam_role=auto

Verify the IAM role is attached to the instance (AWS Console → EC2 → Instance → IAM role = EC2S3AccessRole).

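Terraform destroy fails because the bucket is not empty

The s3.tf file includes force_destroy = true. If you removed it, manually empty the bucket first (replace <bucket-name> with your bucket name):

aws s3 rm s3://<bucket-name> --recursive

If versioning is enabled, this command only removes the current objects. Older object versions and delete markers must also be deleted before the bucket can be removed.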

Permission denied when writing to mount point

Ensure the ubuntu user has write permissions:

sudo chmod 777 /mnt/s3-bucket # not recommended for production
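Since the command above is flagged as not recommended for production, one possible alternative is to remount the bucket with ownership options instead of opening the directory to everyone. The sketch below assumes the s3fs `uid`, `gid`, and `umask` mount options; the helper name `s3fs_opts_for_user` is hypothetical, and the option set is an illustration rather than the repository's configuration.

```shell
# Hypothetical helper: build s3fs options that make USER the owner of the
# mounted files, as an alternative to a world-writable mount point.
s3fs_opts_for_user() {
  user="$1"
  # Resolve the numeric user and group IDs; fail if the user does not exist.
  uid=$(id -u "$user") || return 1
  gid=$(id -g "$user") || return 1
  # umask=0022 removes write permission for group and others.
  printf 'allow_other,iam_role=auto,uid=%s,gid=%s,umask=0022\n' "$uid" "$gid"
}
```

After unmounting with `sudo umount /mnt/s3-bucket`, the bucket could then be remounted with `sudo s3fs <bucket-name> /mnt/s3-bucket -o "$(s3fs_opts_for_user ubuntu)"`, where <bucket-name> stands for your bucket name.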

🤝 Contributing

Your perspective is valuable! Whether you see potential for improvement or appreciate what's already here, your contributions are welcomed and appreciated. Thank you for considering joining us in making this project even better. Feel free to follow me for updates on this project and others, and to explore opportunities for collaboration. Together, we can create something amazing!

📄 License

This project is licensed under the JoebahoCloud License