GitOps-Powered EKS: Automating Kubernetes Deployments with Terraform, ArgoCD, and Monitoring Tools Accessible via Load Balancer

My name is Joseph Mbatchou, and I am grateful for the opportunity to introduce myself to you.

I have been in the tech industry for about 8 years, and I currently work as a Cloud Engineer at CloudSpace Consulting, LLC in Manassas, VA.

My journey began as a Computer Science Teacher and Computer Technician at Biopharcam, a computer sales company in my home country. Upon relocating to the United States, I joined Allied Universal, gradually advancing from an officer to a shift lead position. In this role, I oversaw various applications for training and operational tasks, collaborating closely with engineers and developers from Digital Realty, a data center provider.

Observing these professionals at work sparked my interest in cloud computing, leading me to pursue cloud classes, attend boot camps, and conduct in-depth research across various domains, including training at CloudSpace Academy. This exposure deepened my passion for IT and motivated me to transition into the industry to further my personal growth and contribute to organizational success.

As a Cloud Consultant, I have been privileged to collaborate with Cloud Solution Architects and DevOps teams on numerous projects, consistently exceeding customer expectations through our dedication and innovative solutions. My tenure in this role has enriched my skill set and broadened my professional experience.

Now, equipped with a wealth of knowledge in cloud computing and DevOps practices, I am eager to apply my expertise to new challenges and opportunities. I am confident in my ability to contribute effectively to your learning.

Thank you for your interest in my work. I look forward to having you on board for the learning process.


🚀 Overview:

Managing Kubernetes infrastructure and applications in production requires a repeatable, scalable, and secure approach. This is where Infrastructure as Code (IaC) combined with GitOps shines. By merging Terraform’s declarative infrastructure provisioning with ArgoCD’s continuous delivery, you can achieve a fully automated deployment pipeline that is both auditable and resilient.

In this article, we will walk through an end-to-end solution for launching a private AWS EKS cluster integrated with essential ecosystem tools—ArgoCD for GitOps, and Prometheus & Grafana for observability—using Terraform and Helm. Whether you are building a new cloud-native platform or refining an existing workflow, this project provides a production-grade blueprint to manage both your cloud resources and Kubernetes workloads from a single, version-controlled codebase.

🔧 Problem Statement

This project is designed to offer a complete, modular solution with the following highlights:

Private EKS Cluster: Deployed within a secure, isolated Virtual Private Cloud (VPC) to enhance security.

Infrastructure as Code (IaC): The entire environment—from networking to cluster configuration—is defined and managed using Terraform, ensuring consistency and repeatability.

Helm Integration: Leverages Helm charts to deploy and manage ArgoCD, Prometheus, and Grafana, simplifying complex Kubernetes application lifecycle management.

GitOps-Ready: ArgoCD continuously synchronizes your cluster state with your Git repository, enabling declarative, pull-based deployments.

Modular Design: The Terraform configuration is broken into reusable modules (vpc-ec2, eks), making it easy to extend or adapt for different environments.

Built-in Observability: Prometheus and Grafana are deployed and exposed via internet-facing load balancers, providing immediate insight into cluster and application health.

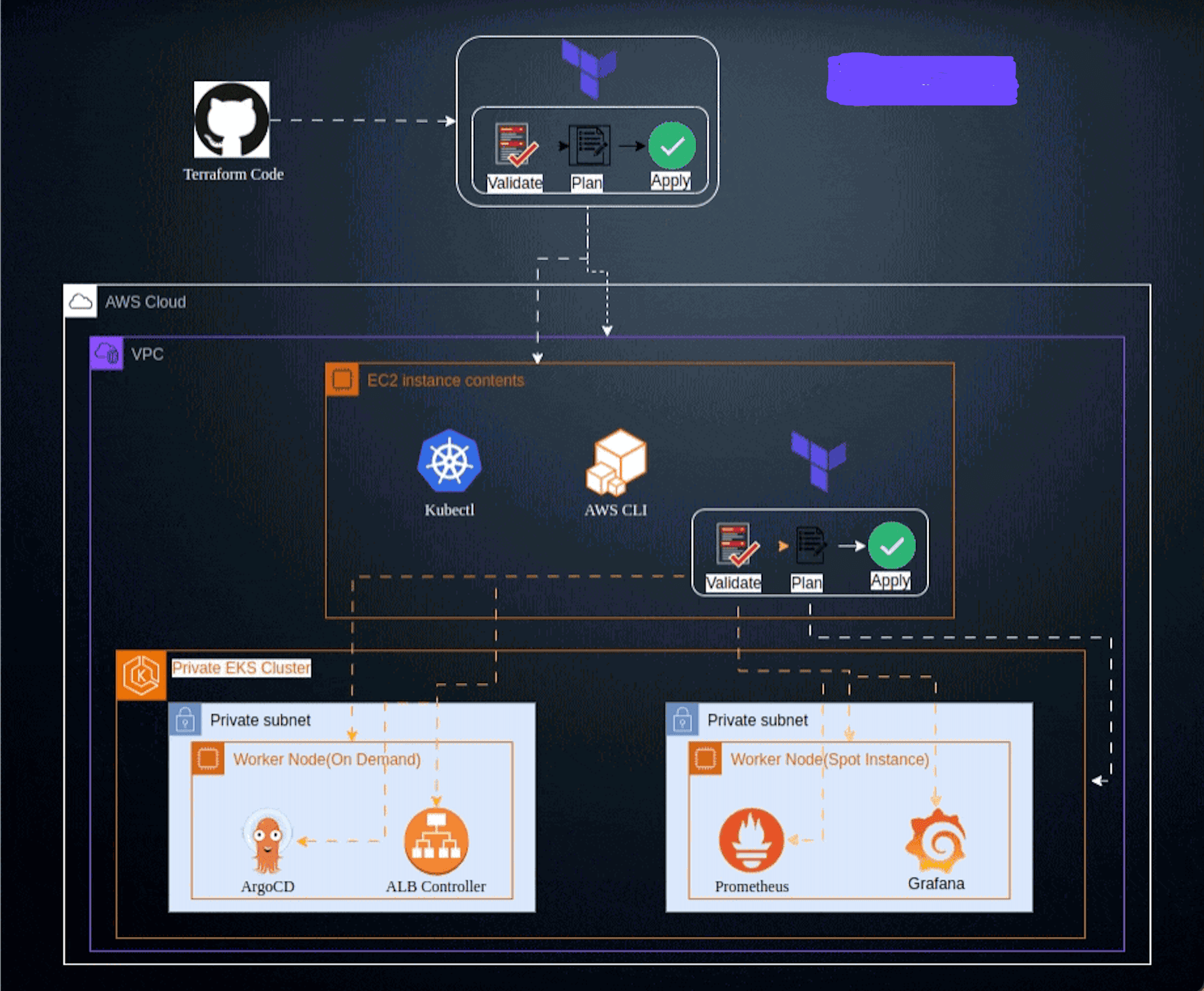

✨ Architecture Overview

The deployment is structured in two main phases, each encapsulated in its own Terraform root module:

Network and Admin Host (vpc-ec2/): This module provisions the foundational network resources, including a VPC with public and private subnets across three availability zones, an Internet Gateway, NAT Gateway, and route tables. It also deploys a helper EC2 instance (accessible via AWS Systems Manager Session Manager) that acts as an administration host for interacting with the EKS cluster.

EKS Cluster and Add-ons (eks/): This module depends on the network layer and builds the Kubernetes environment. It creates the EKS control plane with both public and private API endpoint access, launches managed node groups (including a Spot Instance pool for cost savings), and installs core add-ons. Finally, it uses the Terraform Helm provider to install ArgoCD, Prometheus, and Grafana directly into the cluster, each exposed via its own internet-facing AWS Network Load Balancer (NLB).

The following diagram illustrates the relationship between these components and the flow of traffic from end users to the internal services.

📌 Architecture Diagram

text

                         +-------------------+
                         |    User/Admin     |
                         +---------+---------+
                                   |
           +-----------------------+-----------------------+
           |                       |                       |
           v                       v                       v
  +------------------+    +------------------+    +------------------+
  |    ArgoCD NLB    |    |   Grafana NLB    |    |  Prometheus NLB  |
  |  (Port 443/80)   |    |    (Port 80)     |    |   (Port 9090)    |
  +--------+---------+    +--------+---------+    +--------+---------+
           |                       |                       |
           +-----------------------+-----------------------+
                                   |
                         +---------+---------+
                         |  AWS EKS Cluster  |
                         |   (Private API)   |
                         +---------+---------+
                                   |
         +-------------------------+-------------------------+
         |                         |                         |
+--------+--------+       +--------+--------+       +--------+--------+
|    argocd NS    |       |  prometheus NS  |       |  Worker Nodes   |
|  (ArgoCD Pods)  |       | (Prom/Grafana)  |       | (On-Demand+Spot)|
+-----------------+       +-----------------+       +-----------------+

🌟 Project Requirements

Before you begin, ensure you have the following tools installed and configured on your local machine:

Terraform: Version 1.13.x or 1.14.x (the configuration is validated for these versions).

AWS CLI: Installed and configured with credentials that have sufficient permissions to create EKS clusters, VPCs, EC2 instances, and IAM roles.

kubectl: The Kubernetes command-line tool.

Helm: The Kubernetes package manager.

📋 Getting Started

Follow these steps to clone the repository and deploy the complete environment. All configuration values are provided via committed terraform.tfvars files, allowing you to run terraform apply directly within each module directory.

Step 1: Clone the Repository

Start by cloning the project from GitHub and navigating into the directory:

bash

git clone https://github.com/Joebaho/ArgoCD-EKS-LB-Terraform.git

cd ArgoCD-EKS-LB-Terraform

Step 2: Deploy the Network and Helper EC2 Instance

This step provisions the VPC, subnets, gateways, and a dedicated administration EC2 instance that will be used to manage the cluster.

bash

cd vpc-ec2/

terraform init

terraform validate

terraform plan

terraform apply -auto-approve

Once the deployment completes, connect to the helper EC2 instance using AWS Systems Manager (SSM). This instance is pre-configured with the necessary IAM role to act as your administration host. After connecting, configure your AWS credentials on the instance:

bash

aws configure

aws sts get-caller-identity
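The SSM connection mentioned in this step can also be opened from a terminal. The function below is a minimal sketch rather than part of the repository: it assumes the Session Manager plugin for the AWS CLI is installed, and the Name tag you pass in is a hypothetical placeholder, because the article does not state how the helper instance is tagged.

```bash
#!/usr/bin/env bash
# Look up a running EC2 instance by its Name tag and open an SSM session to it.
# Assumptions: the tag value is a placeholder you supply, and the Session
# Manager plugin for the AWS CLI is installed locally.
connect_admin_host() {
  local name_tag="$1" region="${2:-us-west-2}" instance_id
  instance_id="$(aws ec2 describe-instances \
    --region "$region" \
    --filters "Name=tag:Name,Values=${name_tag}" \
              "Name=instance-state-name,Values=running" \
    --query 'Reservations[0].Instances[0].InstanceId' \
    --output text)"
  # With --output text, an empty query result is printed as "None".
  if [ -z "$instance_id" ] || [ "$instance_id" = "None" ]; then
    echo "no running instance found with Name tag '${name_tag}'" >&2
    return 1
  fi
  aws ssm start-session --region "$region" --target "$instance_id"
}
```

You would call it as connect_admin_host followed by the Name tag of your helper instance, optionally adding a region as the second argument.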

Step 3: Deploy the EKS Cluster and Platform Add-ons

From your administration host (or from your local machine if you have direct network access), deploy the EKS cluster and all Kubernetes add-ons.

bash

cd ../eks/

terraform init

terraform validate

terraform plan

terraform apply -auto-approve

The EKS cluster is configured with a mix of On-Demand and Spot node groups to balance reliability and cost. In this example, we use t3a.medium instances for On-Demand capacity and a variety of c5a, m5a, and t3a instance types for the Spot pool. The deployment also installs essential EKS add-ons, including the VPC CNI, CoreDNS, kube-proxy, and the AWS EBS CSI driver.

Note: The endpoint-public-access setting is enabled in the variables file so that Terraform can communicate with the cluster during provisioning. For a fully private cluster, you can disable this after the deployment is complete.
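If you want to confirm how the API endpoint is currently exposed, before or after changing that setting, a read-only check along these lines may help. It is a sketch that only queries the cluster; the default cluster name and region are the ones used in Step 4 of this article, and the function name is my own.

```bash
#!/usr/bin/env bash
# Print the API endpoint access flags for an EKS cluster:
# public access first, then private access.
show_endpoint_access() {
  local cluster="${1:-dev-medium-eks-cluster}" region="${2:-us-west-2}"
  aws eks describe-cluster \
    --region "$region" \
    --name "$cluster" \
    --query 'cluster.resourcesVpcConfig.[endpointPublicAccess, endpointPrivateAccess]' \
    --output text
}
```

Because the setting is managed by Terraform, treat this as a verification step only and keep making the actual change through the variables file.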

Step 4: Configure kubectl

After the EKS deployment finishes, update your local kubeconfig to interact with the new cluster:

bash

aws eks update-kubeconfig --region us-west-2 --name dev-medium-eks-cluster

kubectl get nodes

You should see your worker nodes (both On-Demand and Spot) listed.
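To tell the On-Demand and Spot nodes apart, you can read the capacity-type label that EKS managed node groups attach to their nodes. The helper below is a small sketch under that assumption; if a node lacks the label (for example, a self-managed node), the count for it will be misleading.

```bash
#!/usr/bin/env bash
# Count nodes per capacity type using the eks.amazonaws.com/capacityType label,
# which EKS managed node groups set to ON_DEMAND or SPOT.
count_nodes_by_capacity() {
  kubectl get nodes --no-headers -L eks.amazonaws.com/capacityType |
    awk '{ count[$NF]++ } END { for (t in count) print t, count[t] }' |
    sort
}
```

The label value is shown as the last column of the kubectl output, which is why the awk script groups on the final field.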

Step 5: Verify Add-ons are Running

Check that all system pods are healthy and the core services are running:

bash

kubectl get pods -A

kubectl get svc -A

You should see the argocd-server, prometheus-kube-prometheus-prometheus, and prometheus-grafana services, each with a LoadBalancer type and a pending or assigned external address (on AWS, this is a load balancer hostname).
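With many services in the cluster, the full listing can be noisy. As a convenience, the following sketch filters the same kubectl output down to services of type LoadBalancer; the function name is my own, and it relies on the default column order that kubectl prints when listing services across all namespaces.

```bash
#!/usr/bin/env bash
# List every Service of type LoadBalancer as "namespace/name external-address".
# Columns with -A are: NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE.
list_lb_services() {
  kubectl get svc -A --no-headers |
    awk '$3 == "LoadBalancer" { print $1 "/" $2, $5 }'
}
```

A service whose load balancer is still provisioning shows <pending> in place of the address.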

✨ Accessing the Deployed Services

Once the eks/ deployment is complete, you can retrieve the public endpoints for ArgoCD, Grafana, and Prometheus.

Accessing ArgoCD

Get the Load Balancer Address:

bash

kubectl -n argocd get svc argocd-server -o jsonpath="{.status.loadBalancer.ingress[0].hostname}{.status.loadBalancer.ingress[0].ip}" && echo

Open the returned address in your browser: http://<EXTERNAL-IP-OR-HOSTNAME>.

Retrieve the Initial Admin Password:

bash

kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 --decode && echo

Use admin as the username and the decoded password to log in.

Accessing Grafana

Find the Grafana Service:

bash

kubectl -n prometheus get svc -o wide | grep grafana

Get the External Address:

bash

kubectl -n prometheus get svc prometheus-grafana -o jsonpath="{.status.loadBalancer.ingress[0].hostname}{.status.loadBalancer.ingress[0].ip}" && echo

Open the address in your browser. The default Grafana credentials are admin / prom-operator.

Accessing Prometheus

List Prometheus Services:

bash

kubectl -n prometheus get svc -o wide | grep prometheus

Fetch the External Address:

bash

kubectl -n prometheus get svc prometheus-kube-prometheus-prometheus -o jsonpath="{.status.loadBalancer.ingress[0].hostname}{.status.loadBalancer.ingress[0].ip}" && echo

Open the address in your browser to access the Prometheus expression browser and target explorer.

Note: If the external addresses remain in Pending state, the AWS Load Balancer may still be provisioning. Wait a few minutes and run the commands again.
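Rather than re-running the commands by hand while a load balancer is provisioning, you could poll until an address appears. The function below is one possible sketch; the function name, the attempt count, and the delay are my own choices, and it reuses the same jsonpath expression shown in the commands above.

```bash
#!/usr/bin/env bash
# wait_for_lb NAMESPACE SERVICE [ATTEMPTS] [DELAY_SECONDS]
# Polls the service until its load balancer reports a hostname or IP,
# prints that address, and returns non-zero if it never appears.
wait_for_lb() {
  local ns="$1" svc="$2" attempts="${3:-30}" delay="${4:-10}" addr i
  for ((i = 1; i <= attempts; i++)); do
    addr="$(kubectl -n "$ns" get svc "$svc" \
      -o jsonpath='{.status.loadBalancer.ingress[0].hostname}{.status.loadBalancer.ingress[0].ip}' \
      2>/dev/null)"
    if [ -n "$addr" ]; then
      printf '%s\n' "$addr"
      return 0
    fi
    sleep "$delay"
  done
  echo "timed out waiting for ${ns}/${svc}" >&2
  return 1
}
```

For example, wait_for_lb argocd argocd-server would check every 10 seconds for up to 30 attempts. Note that an assigned address does not guarantee the load balancer is already passing health checks, so the first browser request may still fail briefly.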

✨ Under the Hood: How It Works

Understanding the inner workings of this setup helps in customizing it for your own needs.

1 - Modular Terraform Configuration

The project is divided into two root modules, each with a clear responsibility:

vpc-ec2/: This module uses a custom local module (../module/vpc-ec2) to create the network foundation. It defines the VPC CIDR block (e.g., 10.16.0.0/16), public and private subnets, Internet Gateway, NAT Gateway, and route tables. It also provisions an EC2 instance with an IAM role that grants SSM access, allowing you to connect to it without needing a public IP address or SSH key.

eks/: This module calls another custom local module (../module/eks) to create the EKS cluster. The cluster uses the private subnets for its node groups, ensuring workloads run in isolated network space. The module configures the EKS control plane with both public and private API endpoints, creates IAM roles for the cluster and node groups, and launches two node groups: one for On-Demand instances and one for Spot instances. EKS add-ons are installed using the aws_eks_addon resource.

2 - Deploying Add-ons with Helm and the AWS Load Balancer Controller

After the EKS cluster is running, the eks/ module uses the Terraform Helm provider to install essential software:

AWS Load Balancer Controller: This controller is required to provision AWS Network Load Balancers (NLBs) for Kubernetes services of type LoadBalancer. The module creates a dedicated IAM policy and role with OIDC federation, allowing the controller to make AWS API calls. It then installs the controller via its official Helm chart.

ArgoCD: Installed using the argo-cd Helm chart from the Argo project repository. The chart is configured to create a service of type LoadBalancer and annotates it to be internet-facing. This exposes the ArgoCD UI directly via an NLB.

Prometheus and Grafana: The kube-prometheus-stack Helm chart deploys a full monitoring solution. The chart values are overridden to set grafana.service.type and prometheus.service.type to LoadBalancer, also making them internet-facing.

This layered approach—using Helm to deploy complex applications within Kubernetes—is a powerful pattern for managing platform services.
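To make the value overrides described above more concrete, here is a sketch that writes an equivalent values file for the kube-prometheus-stack chart. It is not copied from the repository: the file name is hypothetical, and the two service annotations are my assumption about how the services are marked internet-facing for the AWS Load Balancer Controller, so the actual Terraform configuration may set them differently.

```bash
#!/usr/bin/env bash
# Write a kube-prometheus-stack values override that exposes Grafana and
# Prometheus through internet-facing load balancers.
# Assumption: the annotations below are the ones read by the AWS Load
# Balancer Controller; the repository may use a different set.
write_monitoring_values() {
  local out="${1:-monitoring-values.yaml}"
  cat > "$out" <<'EOF'
grafana:
  service:
    type: LoadBalancer
    annotations:
      service.beta.kubernetes.io/aws-load-balancer-type: external
      service.beta.kubernetes.io/aws-load-balancer-scheme: internet-facing
prometheus:
  service:
    type: LoadBalancer
    annotations:
      service.beta.kubernetes.io/aws-load-balancer-type: external
      service.beta.kubernetes.io/aws-load-balancer-scheme: internet-facing
EOF
}
```

With the Helm CLI, a file like this would be passed with the -f flag when installing the chart. In this project the same values are supplied through the Terraform Helm provider instead, which keeps the add-ons in the same state and workflow as the cluster itself.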

3 - State Management

Both root modules use an S3 backend to store their Terraform state files. The state is stored in a bucket named baho-backup-bucket in the us-west-2 region, with different keys for each module (vpc-ec2.tfstate and eks.tfstate). This setup enables team collaboration and provides a safe, durable location for your infrastructure state.
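For readers who have not configured a remote backend before, the sketch below prints what an S3 backend block with these settings could look like. The bucket, region, and key names come from the paragraph above; the function itself is only an illustration, and the blocks in the repository may include further options.

```bash
#!/usr/bin/env bash
# Print an S3 backend block for one root module, using the bucket, region,
# and state keys named in the article.
print_backend_block() {
  local state_key="$1"   # e.g. vpc-ec2.tfstate or eks.tfstate
  cat <<EOF
terraform {
  backend "s3" {
    bucket = "baho-backup-bucket"
    key    = "${state_key}"
    region = "us-west-2"
  }
}
EOF
}
```

Calling print_backend_block eks.tfstate prints the block for the eks/ module. Because S3 bucket names are globally unique, you would replace the bucket with one you own before running terraform init.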

✨ Customization and Best Practices

This project is designed to be a foundation that you can adapt for your own use cases. Here are a few suggestions for customization:

Adjust Instance Types: The eks/terraform.tfvars file defines the instance types for On-Demand and Spot node groups. Modify these lists to suit your workload requirements and budget.

Restrict the API Endpoint to Private Access: If you want a more secure cluster, set endpoint-public-access = false in the eks/terraform.tfvars file after you have established a private network path to the cluster (e.g., via a VPN or Direct Connect).

Configure ArgoCD with Ingress: The current configuration exposes ArgoCD via a LoadBalancer service. For production environments, consider using an Ingress controller (like the AWS Load Balancer Controller) to manage access with SSL termination and routing rules.

Integrate with Git: To fully utilize GitOps, configure ArgoCD to point to your application repository. You can define applications declaratively and let ArgoCD sync them automatically.
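As an illustration of the Git integration suggested above, the following sketch writes a minimal ArgoCD Application manifest with automated sync enabled. Everything specific in it is hypothetical: the application name demo-app, the target namespace demo, and the repository URL and path you pass in are placeholders for your own values.

```bash
#!/usr/bin/env bash
# write_argocd_app REPO_URL APP_PATH [OUTPUT_FILE]
# Writes a minimal Argo CD Application that tracks a path in a Git repository
# and syncs it automatically. Names and namespaces are placeholders.
write_argocd_app() {
  local repo_url="$1" app_path="$2" out="${3:-application.yaml}"
  cat > "$out" <<EOF
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: demo-app
  namespace: argocd
spec:
  project: default
  source:
    repoURL: ${repo_url}
    targetRevision: HEAD
    path: ${app_path}
  destination:
    server: https://kubernetes.default.svc
    namespace: demo
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
EOF
}
```

After generating the file, kubectl apply -f application.yaml registers the application with ArgoCD. With prune and selfHeal enabled, ArgoCD removes resources deleted from Git and reverts manual changes in the cluster, so you may prefer to leave them off until you trust the repository contents.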

✨ Troubleshooting

Load Balancer Pending: If the external IP for a service remains pending, check that the AWS Load Balancer Controller is running correctly:

bash

kubectl get pods -n aws-loadbalancer-controller

kubectl logs -n aws-loadbalancer-controller -l app.kubernetes.io/name=aws-load-balancer-controller

ArgoCD Login Failed: Ensure you are using the correct decoded password. The secret can sometimes take a few minutes to be created after the Helm release.

Cannot Connect to EKS Cluster: If you get an error from kubectl, verify that your AWS credentials are still valid and that you have configured the kubeconfig with the correct cluster name and region.

Destroy Order: Always destroy the resources in the reverse order of creation. First destroy the eks/ stack, then the vpc-ec2/ stack to avoid dependency errors.

✨ Cleaning Up

To avoid ongoing charges, destroy the infrastructure when it is no longer needed. Run the following commands from the repository root:

bash

cd eks/

terraform destroy -auto-approve

cd ../vpc-ec2/

terraform destroy -auto-approve

If you want to review the resources that will be deleted beforehand, you can run terraform plan -destroy in each directory.
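If you prefer a single command that enforces the destroy order and still lets you review each plan, a small wrapper like the one below is one option. It is a sketch of my own rather than a script from the repository; it omits -auto-approve on purpose so that Terraform shows the destroy plan and asks for confirmation for each stack.

```bash
#!/usr/bin/env bash
# destroy_stacks [REPO_ROOT]
# Destroys the stacks in reverse order of creation (eks first, then vpc-ec2)
# and stops at the first failure so the network is never removed while the
# cluster still depends on it.
destroy_stacks() {
  local repo_root="${1:-.}" stack
  for stack in eks vpc-ec2; do
    echo "==> destroying ${stack}"
    # Without -auto-approve, Terraform prints the plan and prompts for "yes".
    terraform -chdir="${repo_root}/${stack}" destroy || return 1
  done
}
```

Run destroy_stacks from the repository root, or pass the path to the repository as the first argument.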

🔗 Conclusion

This project demonstrates a production-ready approach to deploying a secure, scalable, and observable Kubernetes environment on AWS. By leveraging Terraform for infrastructure provisioning and ArgoCD for GitOps-driven application delivery, you establish a foundation that is not only automated but also inherently auditable and resilient.

The combination of a private EKS cluster, a helper administration host, and integrated monitoring tools provides a complete platform that can serve as the starting point for your cloud-native journey. As you continue to build on this foundation, you can extend it with additional Helm charts, configure ArgoCD for application synchronization, and implement more advanced networking patterns.

🤝 Contributing

Your perspective is valuable! Whether you see potential for improvement or appreciate what's already here, your contributions are welcomed and appreciated. Thank you for considering joining us in making this project even better. Feel free to follow me for updates on this project and others, and to explore opportunities for collaboration. Together, we can create something amazing!

Contributions are welcome 🚀

If you’d like to improve this project:

Fork the repository

Create a feature branch

Submit a pull request

Ideas for contributions:

Add private subnet + NAT architecture

Introduce Ansible roles

Add CI/CD validation

Extend to multi-region deployments

📄 License

This project is licensed under the JoebahoCloud License.